Last updated on May 15th, 2026 at 02:30 pm

A web crawler, also known as a spider, is a bot operated by search engines like Bing and Google to index website content across the Internet so that those websites can appear in search engine results.

This software program works by scanning sites and reading their content in order to generate entries for the search engine index. All search engines operate website crawlers, and these crawlers typically also work on content submitted by site owners themselves.
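The scan-read-index loop described above can be sketched in a few lines. This is only an illustration, not how any particular search engine works: the names `PageParser`, `crawl`, and `FAKE_WEB` are hypothetical, and the "web" is an in-memory dictionary standing in for real HTTP requests.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class PageParser(HTMLParser):
    """Collects hyperlinks and words from one HTML page."""

    def __init__(self):
        super().__init__()
        self.links = []
        self.words = []

    def handle_starttag(self, tag, attrs):
        # Record the target of every <a href="..."> tag.
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)

    def handle_data(self, data):
        # Treat every whitespace-separated token as an index term.
        self.words.extend(word.lower() for word in data.split())


def crawl(start_url, fetch, max_pages=50):
    """Breadth-first crawl that returns a word -> set-of-URLs index."""
    index = {}
    seen = {start_url}
    queue = deque([start_url])
    processed = 0
    while queue and processed < max_pages:
        url = queue.popleft()
        html = fetch(url)  # assumed to return None for missing pages
        processed += 1
        if html is None:
            continue
        parser = PageParser()
        parser.feed(html)
        parser.close()
        for word in parser.words:
            index.setdefault(word, set()).add(url)
        for href in parser.links:
            target = urljoin(url, href)
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return index


# Hypothetical two-page site used instead of real network access.
FAKE_WEB = {
    "https://example.com/": '<a href="/about">About</a> Welcome home',
    "https://example.com/about": "<p>About our crawler</p>",
}

index = crawl("https://example.com/", FAKE_WEB.get)
print(sorted(index["about"]))
```

Passing the fetch function in as a parameter keeps the sketch runnable offline; a real crawler would replace it with an HTTP client and add rate limiting.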

Search engines apply a search algorithm to the data that web crawlers collect, but a crawler does not have free rein over a site. Under the Standard for Robot Exclusion (SRE), web crawlers are governed by the “rules of politeness”. Following these rules, a crawler requests information from the site’s server (typically a robots.txt file) to determine which files it may or may not read and which files to exclude from the search engine index. Crawlers that abide by the SRE also do not bypass firewalls, which are put in place to protect the privacy of site owners.
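As a rough illustration of these politeness rules, Python's standard library ships a parser for robots.txt files. The sketch below is a minimal example under stated assumptions: the robots.txt content, the `ExampleBot` user agent, and the example.com URLs are all made up for demonstration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real crawler would download this
# file from the root of the site it is about to visit.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Allow: /
"""


def build_rules(robots_txt):
    """Parse robots.txt text into a rule set that can be queried."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


rules = build_rules(ROBOTS_TXT)

# A polite crawler asks before fetching each URL.
for url in (
    "https://example.com/blog/post.html",
    "https://example.com/private/report.html",
):
    allowed = rules.can_fetch("ExampleBot", url)
    print(url, "allowed" if allowed else "blocked")
```

Note that robots.txt is a convention rather than an enforcement mechanism: it only restricts crawlers that choose to check it, as in the loop above.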

Alongside the SRE, another specialized algorithm enables the web crawler to create search strings of keywords and operators. These are built into the search engine’s index of websites and pages so that they can be used in future search results.

Benefits of Using a Website Crawler

A website crawler goes through sites and builds a database of search strings, which helps users find what they are looking for on the SERP (Search Engine Results Page) in very little time. These search strings consist mainly of keywords and operators, which serve as the search commands and are usually archived per IP address.

This database is then uploaded into the search engine index to update its information. The update accommodates new sites and recently updated pages so that they get a fair and relevant chance of appearing in results.

Seven Things That Make a Web Crawler Worth It

- It is scalable: A crawler’s workload will change as a business grows. A good site crawler should be open to expansion and should not slow you down in the process.

- It is transparent: There should be no hidden costs for your web crawler, and you should know exactly what you are paying for.

- It is reliable: A site that stays static stays dead. Sites regularly change as content is updated, pages are added, and layouts are redesigned. A good web crawler monitors these changes and updates its database efficiently.

- Anti-crawler mechanisms: All good web crawlers are required to function within the limits defined in the SRE to protect the privacy of the site.

- Data delivery: If you want to view the collected information in a particular format, go for a website crawler that can deliver data in multiple formats.

- Support: Make sure the website crawler comes with a good support system that frees you from needless stress when things go downhill.

- Data quality: Make sure that the software you ultimately choose can clean up unstructured data and present it to you in a legible manner.
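One way a crawler could handle the reliability point above (noticing that a page has changed without reprocessing everything) is to store a fingerprint of each page and compare it on the next visit. The sketch below is one possible approach, not a description of any specific product; the function names and sample pages are hypothetical.

```python
import hashlib


def fingerprint(html):
    """Return a SHA-256 hex digest of the page content."""
    return hashlib.sha256(html.encode("utf-8")).hexdigest()


def pages_to_reindex(stored, fetched):
    """Return URLs whose content is new or changed, updating `stored`.

    `stored` maps URL -> fingerprint from the previous crawl.
    `fetched` maps URL -> HTML from the current crawl.
    """
    changed = []
    for url, html in fetched.items():
        digest = fingerprint(html)
        if stored.get(url) != digest:
            changed.append(url)
            stored[url] = digest
    return changed


# Hypothetical crawl results from two consecutive visits.
stored = {}
first_visit = {"https://example.com/": "<h1>Home</h1>",
               "https://example.com/about": "<h1>About</h1>"}
second_visit = {"https://example.com/": "<h1>Home</h1>",
                "https://example.com/about": "<h1>About us</h1>"}

print(pages_to_reindex(stored, first_visit))   # every page is new
print(pages_to_reindex(stored, second_visit))  # only the edited page
```

Hashing the raw HTML is the simplest choice but treats any byte-level difference as a change; a production crawler would likely normalize the content first.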

How are SEO and Web Crawlers Related?

Web crawlers essentially go through your website and check whether each web page meets the criteria needed for it to answer a search query. These criteria include proper structure, hyperlinks, keyword optimization, and more.

If a page meets them, a search engine such as Google can index it, which makes it eligible to appear among the top results. Hence, it is important that your web pages allow crawlers to go through your website. Broken links can stop crawlers from reaching parts of your site, and poor keyword optimization makes it harder to match your pages to queries. SEO aids web crawlers and helps your site get indexed.

Conclusion

Site crawlers have been around since the early 1990s, the early days of the web. New website crawlers keep appearing, and the market is ever-expanding. The competition is therefore tough, and developing a new website crawler is a challenging feat.

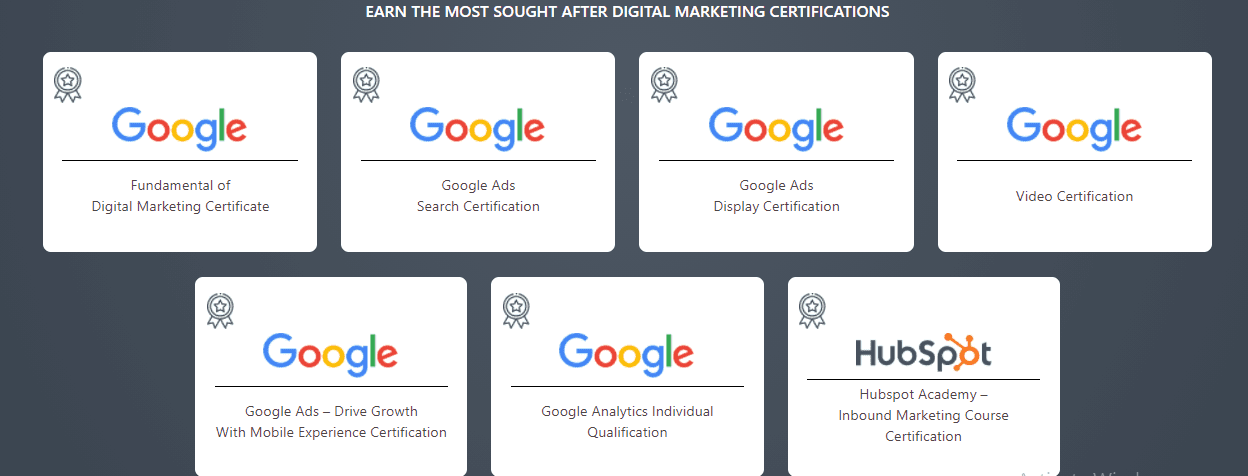

Nonetheless, it is an interesting subject to learn, and a good online SEO course or Digital Marketing course can help you master it.
