Last updated on May 15th, 2026 at 02:58 pm

Collecting relevant data from the billions of pages online and scanning their content by hand is an impractical task, given the amount of data generated daily. Web scraping solves this problem. Whether the goal is listing products or collecting information for research, web extraction software is indispensable.

Web scraping, also known as web extraction, is a method for gathering large quantities of data and converting it from an unstructured to a structured format. As the number of websites keeps growing, collecting the right data by hand becomes increasingly difficult, and web scraping makes this task simpler. Because data acquisition is the first step of most analysis, understanding web scraping is valuable for building a career in data science.

This article covers web scraping software, its types, the techniques it uses and how it works.

What is web scraping?

Information published on a website is delivered as HTML, which must be converted into structured data, such as a database table or a spreadsheet, before it can be analysed. Web scraping is the process of collecting this raw data from web pages and converting it into that structured form.

The methods include using specific APIs and online tools, or writing custom web scraping programs. Web pages are built with text-based markup languages (HTML and XHTML) and typically contain a substantial quantity of useful text. However, most websites are designed for end users, not for machine consumption, so dedicated tools and software have been developed to make scraping them simpler. Some websites offer direct bulk data extraction, for example through an API, while others do not. When no such option exists, web scraping is useful because custom scraping code can extract the data from the pages themselves.
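As an illustration of turning HTML into structured data, the following is a minimal sketch that uses only Python's standard library. The sample page, the `TableParser` class and the `table_to_records` function are hypothetical names invented for this example; real pages are usually messier and often call for a dedicated parsing library.

```python
from html.parser import HTMLParser


class TableParser(HTMLParser):
    """Collect the text of every table cell, grouped by row."""

    def __init__(self):
        super().__init__()
        self.rows = []     # finished rows, each a list of cell strings
        self._row = None   # cells of the row being read, or None
        self._cell = None  # text fragments of the cell being read, or None

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None
            self._cell = None


def table_to_records(html):
    """Use the first row as field names and turn the other rows into dicts."""
    parser = TableParser()
    parser.feed(html)
    if not parser.rows:
        return []
    header, *body = parser.rows
    return [dict(zip(header, row)) for row in body]


# Hypothetical sample page, invented for this example.
SAMPLE_HTML = """
<table>
  <tr><th>name</th><th>price</th></tr>
  <tr><td>Notebook</td><td>4.50</td></tr>
  <tr><td>Pen</td><td>1.20</td></tr>
</table>
"""

records = table_to_records(SAMPLE_HTML)
print(records)
```

Each record is a plain dictionary, so it can be written to a spreadsheet or inserted into a database without further conversion.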

Data extraction requires both a crawler and a scraper. The crawler, an automated program sometimes described as AI-based, browses the web by following links from page to page to find the material it requires. The scraper, on the other hand, is a tool built to extract data from those pages. A scraper's architecture may vary significantly depending on the scale of the project and how difficult it is to extract the data precisely and efficiently.
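The crawler's job of following links can be sketched in a few lines of Python. This is a simplified illustration, not a production crawler: the `crawl` function, the `LinkParser` class and the in-memory `FAKE_SITE` are all hypothetical, and the `fetch` argument stands in for whatever function actually downloads a page. A real crawler would also respect robots.txt and rate limits, which are omitted here.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkParser(HTMLParser):
    """Collect the href value of every <a> tag on a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def crawl(start_url, fetch, max_pages=10):
    """Breadth-first crawl. Returns the URLs visited, in visiting order.

    `fetch` is any callable that takes a URL and returns the page's HTML,
    or None when the page cannot be retrieved.
    """
    seen = {start_url}
    queue = deque([start_url])
    visited = []
    while queue and len(visited) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue  # skip pages that could not be retrieved
        visited.append(url)
        parser = LinkParser()
        parser.feed(html)
        for href in parser.links:
            absolute = urljoin(url, href)  # resolve relative links
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return visited


# Hypothetical three-page site held in memory, so the example needs no network.
FAKE_SITE = {
    "https://example.test/": '<a href="/a">A</a> <a href="/b">B</a>',
    "https://example.test/a": '<a href="/b">B</a> <a href="/">home</a>',
    "https://example.test/b": '<a href="/missing">gone</a>',
}

pages = crawl("https://example.test/", FAKE_SITE.get)
print(pages)
```

Passing `fetch` in as a parameter keeps the link-following logic separate from the download step, so the same crawler can be pointed at a real HTTP client or at test data.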

Sign up for a data analytics certification course to gain in-depth insight into web scraping.

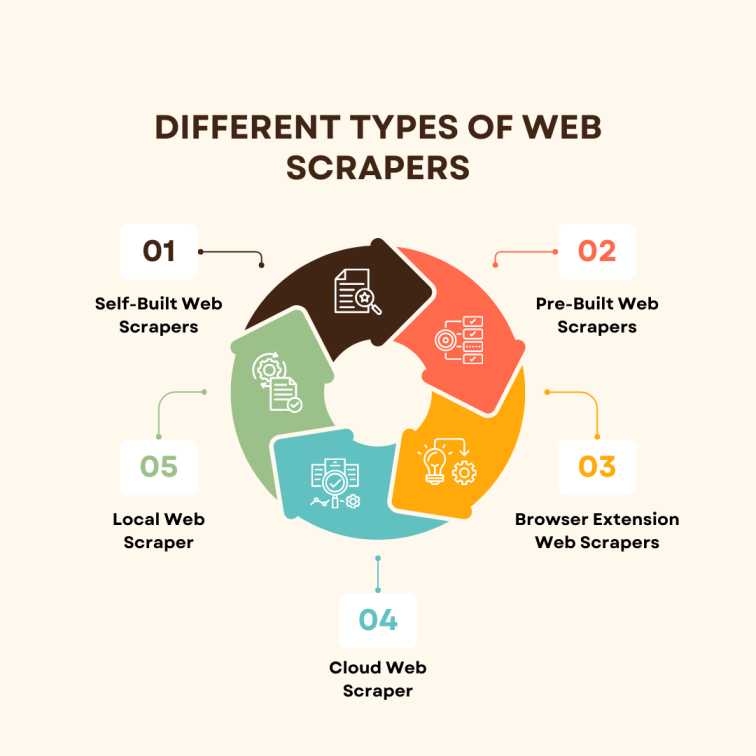

Different Types of Web Scrapers

Web scrapers can be categorised into five types: self-built web scrapers, pre-built web scrapers, browser extension web scrapers, cloud web scrapers and local web scrapers.

To succeed in a career in data analytics, it is essential to understand the different types of web scrapers.

Self-Built Web Scrapers

Self-built web scrapers are written by the user, which requires some programming experience. They are therefore not always recommended for complete beginners, but a simple one can be a good entry point into the world of data collection.

Pre-Built Web Scrapers

Pre-built web scrapers are easily accessible to everybody. They are simple to use, customisable and can be downloaded freely.

Their customisation options set them apart from other web scrapers in several ways.

Browser Extension Web Scrapers

As the name implies, these scrapers are extensions added to a web browser. Users find them simple to use because they are already familiar with the browser. However, because the extension runs inside the browser, its features are limited. Software web scrapers, which are installed separately, are far more sophisticated and provide a more streamlined working environment, so they are used to overcome this limitation.

Cloud Web Scraper

Web scrapers operating in the cloud typically run on off-site servers provided by the company you bought the scraper from. Because the scraping runs on those servers rather than on your machine, your computer's resources are freed for other tasks.

Local Web Scraper

These scrapers run on your own computer and use its local resources. Because scraping consumes RAM and CPU, the device slows down while the scraper runs, and completing other tasks becomes laborious.

Techniques Used

Registering for a data science course is the ideal choice for aspiring data scientists to master the techniques used during web scraping. The techniques include the following:

- Copying specific data from a website and pasting it into a spreadsheet manually.

- Using Python, which has proven well suited to scraping substantial amounts of data from particular websites. It is popular in part because of its support for matching text patterns with regular expressions. Scrapers are also written in a variety of other programming languages, including JavaScript and C++.

- Applying machine learning to identify and extract information from web pages.

- Utilising semantic markups or metadata, semantic annotation recognition locates and extracts data snippets.
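The last technique in the list, reading semantic markup, can be illustrated with JSON-LD, one common way of embedding metadata in a page. The sketch below uses only Python's standard library. The sample page and the `JsonLdParser` class are hypothetical, and other markup formats such as microdata would need a different approach.

```python
import json
from html.parser import HTMLParser


class JsonLdParser(HTMLParser):
    """Collect the JSON-LD metadata blocks embedded in a page."""

    def __init__(self):
        super().__init__()
        self.items = []      # decoded JSON-LD objects
        self._buffer = None  # text of the script being read, or None

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._buffer = []

    def handle_data(self, data):
        if self._buffer is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._buffer is not None:
            try:
                self.items.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # ignore blocks that are not valid JSON
            self._buffer = None


# Hypothetical product page, invented for this example.
SAMPLE_HTML = """
<html><head>
<script type="application/ld+json">
{"@type": "Product", "name": "Notebook",
 "offers": {"price": "4.50", "priceCurrency": "USD"}}
</script>
</head><body><h1>Notebook</h1></body></html>
"""

parser = JsonLdParser()
parser.feed(SAMPLE_HTML)
for item in parser.items:
    print(item["name"], item["offers"]["price"])
```

When a page provides metadata like this, reading it is usually more reliable than locating the same values in the visible HTML, because the metadata is less affected by layout changes.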

How Does It Work?

In data science training, participants are given a thorough explanation of web scraping. Illustrated below is the step-by-step process of web scraping.

- The initial and most crucial step is requesting access. The web scraper sends an HTTP request to a specific website to gather data from that site.

- When the request is approved, the website returns the page, and the scraper parses the HTML to interpret its content.

- Once the HTML structure has been parsed, the scraper locates the required information within it and extracts the necessary data.

- As the final step, the extracted data is stored in a structured form, such as a spreadsheet or a database.
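The steps above can be sketched end to end in Python using only the standard library. This is a simplified illustration under stated assumptions: the function names, the `example-scraper/0.1` User-Agent string and the choice of `<h2>` headings as the data to extract are all hypothetical. The sample run works on an inline HTML string, so `fetch_html` is defined but not called and no network access is needed.

```python
import csv
import io
import urllib.request
from html.parser import HTMLParser


def fetch_html(url, timeout=10):
    """Step 1: send an HTTP GET request and return the response body as text."""
    request = urllib.request.Request(
        url, headers={"User-Agent": "example-scraper/0.1"}  # hypothetical name
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


class HeadingParser(HTMLParser):
    """Steps 2 and 3: parse the HTML and pull out the text of <h2> headings."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self._parts = None  # text fragments of the heading being read, or None

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self._parts = []

    def handle_data(self, data):
        if self._parts is not None:
            self._parts.append(data)

    def handle_endtag(self, tag):
        if tag == "h2" and self._parts is not None:
            self.headings.append("".join(self._parts).strip())
            self._parts = None


def extract_headings(html):
    parser = HeadingParser()
    parser.feed(html)
    return parser.headings


def write_csv(headings, out):
    """Step 4: store the extracted data as rows that a spreadsheet can open."""
    writer = csv.writer(out)
    writer.writerow(["position", "heading"])
    for position, heading in enumerate(headings, start=1):
        writer.writerow([position, heading])


# Hypothetical page content, used here in place of a live request.
SAMPLE_HTML = "<h1>Shop</h1><h2>Notebooks</h2><p>...</p><h2>Pens &amp; pencils</h2>"

buffer = io.StringIO()
write_csv(extract_headings(SAMPLE_HTML), buffer)
print(buffer.getvalue())
```

Against a live site, the same pipeline would pass `fetch_html(url)` to `extract_headings` and write to a file opened with `open("headings.csv", "w", newline="")` instead of an in-memory buffer.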

Conclusion

Web scraping is essential in an era where the number of websites is growing rapidly. Data collection has evolved into a crucial tactic for businesses and individuals alike. Collecting accurate data is critical for a business's success, and there are numerous data collection tools, methods and procedures to choose from. Web scraping continues to be one of the most popular of these and a key subject covered in data analytics courses. Enrolling in the Post Graduate Programme in Data Science and Analytics can provide the data science knowledge needed to build a career as a data analyst.

Visit the Imarticus Learning website to learn more about the data science certification programme.